At work, I've recently been facing a two-pronged problem with our server infrastructure. It's been weighing on my mind for a while, and I finally decided that something had to be done. I wasn't sure what I would end up doing, but I knew I needed to act both carefully and quickly. Those two things don't usually go hand-in-hand when you're planning IT infrastructure whose life expectancy needs to be five years or more!

The first prong of our problem was storage space: we're running out of it. We have a SAN at our HQ location that hosts two VMware vSphere 4.x instances, along with data for our network monitoring system (OpenNMS) and a few other odds and ends. I calculated that within six months we would hit 100% of that SAN's capacity, even without adding any new projects that need storage. The catch is that a project currently in the works needs not only the rest of the space in this SAN, but an additional 3x its original capacity! To make matters worse, when I projected storage needs out 18 months, we were beyond the SAN's maximum capacity. A band-aid fix would cost around $10,000 now and be completely useless in just over a year. While $10,000 is an absolute steal for adding that much capacity to a SAN, I couldn't justify a $10,000 hit for roughly 12 months of useful service. There's so much more I'd rather do with that money!
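The capacity math behind that decision is simple enough to sketch. This is a minimal illustration, assuming storage use grows linearly month over month; the capacity, usage, and growth figures are hypothetical placeholders rather than our real SAN numbers. Only the $10,000 and 12-month figures come from the paragraph above.

```python
# A minimal capacity-projection sketch. ASSUMPTION: usage grows linearly.
# The capacity/usage/growth numbers used below are hypothetical placeholders.


def months_until_full(capacity_tb: float, used_tb: float, growth_tb_per_month: float) -> float:
    """Return how many months remain before usage reaches capacity."""
    if growth_tb_per_month <= 0:
        return float("inf")  # no growth means the array never fills
    return max(0.0, (capacity_tb - used_tb) / growth_tb_per_month)


def cost_per_useful_month(upgrade_cost: float, useful_months: float) -> float:
    """Spread a one-time upgrade cost over the months it actually helps."""
    return upgrade_cost / useful_months


# Hypothetical SAN: 10 TB total, 7 TB used, growing 0.5 TB per month.
print(months_until_full(10, 7, 0.5))  # 6.0 months
# The band-aid upgrade: $10,000 for roughly 12 months of service.
print(round(cost_per_useful_month(10_000, 12), 2))  # 833.33 dollars per month
```

Framed as dollars per month of useful service, the band-aid looks a lot less like a bargain.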

The second prong of the problem was performance. We are already running up against the performance limits of the very SAN we were looking to upgrade! So while spending the money to upgrade its capacity would solve one problem, it would actually make the second one worse, because the more we rely on this SAN, the worse the performance problem gets. In fact, when I charted the SAN's projected performance over the next year, it would be so overutilized that everything we ask of it would take more than 24 hours to complete. Needless to say, that's a pretty severe problem when your business hours are 7am to 7pm.
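Why does load climbing toward the limit hurt so badly? One way to see it is the textbook M/M/1 queueing model. This is not how I charted the projection above; it's a standard approximation swapped in purely for illustration, assuming a single queue with random arrivals. The 5 ms per-I/O service time is a hypothetical figure.

```python
# M/M/1 queueing approximation (a textbook model, not the projection method above).
# ASSUMPTION: a single queue, Poisson arrivals, exponential service times.


def mm1_response_time_ms(iops: float, service_time_ms: float) -> float:
    """Mean time an I/O spends queued plus in service, in milliseconds."""
    utilization = iops * service_time_ms / 1000.0  # fraction of each second spent busy
    if utilization >= 1.0:
        return float("inf")  # demand exceeds capacity: the queue grows without bound
    return service_time_ms / (1.0 - utilization)


# Hypothetical array that needs 5 ms per I/O, i.e. it saturates at 200 IOPS.
for load in (100, 180, 198, 250):
    print(f"{load} IOPS -> {mm1_response_time_ms(load, 5.0):.1f} ms")
```

In this model, response time is 2x the raw service time at 50% utilization, 10x at 90%, and 100x at 99%. Past 100%, work simply piles up faster than it can be finished.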

So I went back to the drawing board. I puzzled over several possible solutions, but none of them really made me happy. I could spend $20,000 on a second SAN and run the two in parallel, I could steal some capacity from our backup SAN for our customer data, I could add capacity to the existing SAN and hope my performance calculations were wrong, or, of course, I could do nothing. At the time, that last option was by FAR the best one on the table.

Then it dawned on me. I had experimented here with building a "home-grown" SAN out of a few different servers. Servers were cheap, and I could use any brand of hard drive I wanted. I wasn't locked into a single vendor, and the price tag was extremely appealing: for $10,000 you can build a Linux (or Windows) server with so many disks in it that you actually need special hardware to handle them all! I had already been toying with the idea of building a small Linux SAN for work, so why not build a massive one instead? I mulled this over for months until the opportunity presented itself. And by that I mean my back was against the storage wall, and a decision had to be made within a few weeks.

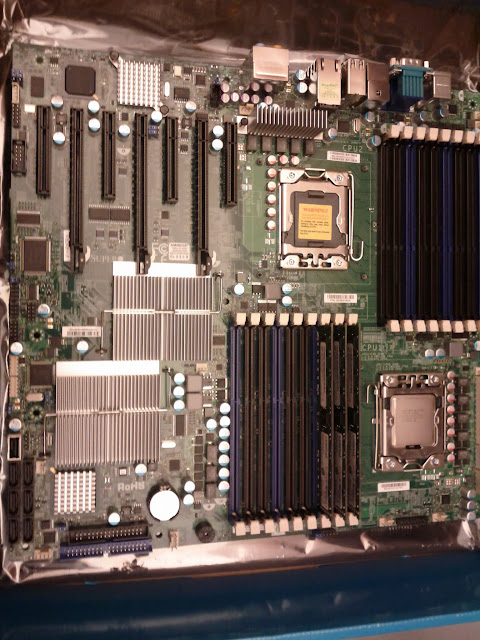

Well, that day has come! While ordering software for my company, I negotiated a deal that saved around $3,500. Those savings let me build a Linux server with room for 42 disk drives, a second CPU, more RAM than you could shake a stick at, and all the bells and whistles I wanted. The new system will let me increase throughput by adding much faster network cards and disk controllers, while letting me add affordable storage any time I want! Better yet, I'll be modeling it after systems used at CERN and LLNL, two organizations with some serious storage needs.

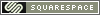

Finally, after months of planning and research, the order has been placed and the parts are on their way. I'll be using twenty 1TB hard drives, two or four 60GB SSDs, 6GB of RAM (initially), a single Intel Xeon E5620 CPU, a 10Gbit Ethernet card, and an Areca 24-port 6Gb/s SAS card in JBOD mode. The plan is to build a ZFS pool out of the disks, tune it to use the SSDs to accelerate data access, turn on data deduplication, and benchmark the HELL out of it. Once I'm happy with the results, I'll carve storage out of the pool and migrate things off the old SAN. When the old SAN is empty, I'll add it to the ZFS pool as slower storage for less frequently accessed data. As we need more storage, the system will expand with $1,000 external disk chassis that hold up to 24 disks each. A single Areca card supports up to 128 disks, and the server is physically limited to two cards. I think 256 drives of 3TB or more (at least 768TB raw) would be more than enough space to hold us over for the foreseeable future.
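Here's a rough sketch of what that first pool and its growth path might look like, generated from Python so the layout and capacity math live in one place. It only prints commands; it doesn't run them. Several details are assumptions, not decisions from this post: the pool name `tank`, the device paths, splitting the twenty disks into two 10-disk RAID-Z2 vdevs, and using the SSDs as an L2ARC read cache (`cache`) with an optional mirrored intent log (`log mirror`). The chassis estimate also assumes all 42 internal bays count toward the 256-disk limit.

```python
import math
import shlex

# Hypothetical device paths: real names depend on the OS and on how the
# Areca card presents its disks in JBOD mode.
DATA_DISKS = [f"/dev/disk/by-id/hypothetical-hdd-{i:02d}" for i in range(20)]
CACHE_SSDS = ["/dev/disk/by-id/hypothetical-ssd-0", "/dev/disk/by-id/hypothetical-ssd-1"]


def zpool_create_argv(pool, disks, vdev_width, cache=(), log_mirror=(), parity="raidz2"):
    """Build a `zpool create` command line: striped RAID-Z vdevs plus optional SSD devices."""
    if len(disks) % vdev_width:
        raise ValueError("disk count must be a multiple of the vdev width")
    argv = ["zpool", "create", pool]
    for start in range(0, len(disks), vdev_width):
        argv.append(parity)  # each group of vdev_width disks becomes one RAID-Z vdev
        argv.extend(disks[start:start + vdev_width])
    if log_mirror:
        argv += ["log", "mirror", *log_mirror]  # separate intent log on mirrored SSDs
    if cache:
        argv += ["cache", *cache]  # L2ARC read cache on SSDs
    return argv


def usable_tb(disk_count, disk_tb, vdev_width, parity_disks=2):
    """Rough usable space before ZFS metadata overhead and any dedup savings."""
    return (disk_count // vdev_width) * (vdev_width - parity_disks) * disk_tb


def chassis_needed(max_disks, internal_bays, bays_per_chassis):
    """External chassis required to reach max_disks once internal bays are full."""
    return math.ceil(max(0, max_disks - internal_bays) / bays_per_chassis)


argv = zpool_create_argv("tank", DATA_DISKS, vdev_width=10, cache=CACHE_SSDS)
print(shlex.join(argv))
print(shlex.join(["zfs", "set", "dedup=on", "tank"]))

# Initial build: 20 x 1 TB disks as two 10-disk RAID-Z2 vdevs.
print(usable_tb(20, 1, 10), "TB usable (approx.)")
# Stated ceiling: 2 Areca cards x 128 disks, 3 TB each.
max_disks = 2 * 128
print(max_disks * 3, "TB raw")
print(chassis_needed(max_disks, 42, 24), "external 24-bay chassis")
```

With four SSDs instead of two, passing two of them as `log_mirror` and two as `cache` is one common split. The usable figures ignore ZFS metadata overhead and whatever space dedup saves, so treat them as ballpark numbers.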

I plan to keep good notes during this process, and I hope to update this blog with the details as I make progress. I'll also be using the Phoronix Test Suite to benchmark access to the ZFS pool both on the server itself and from iSCSI, CIFS, NFS, and AoE clients, so be prepared for lots of boring numbers.

Sep 22, 2011 at 15:45

Tom Cameron